GeneCIS: A Benchmark for General Conditional Image Similarity

In CVPR 2023

1 VGG, University of Oxford

2 FAIR, Meta AI

Abstract

We argue that there are many notions of ‘similarity’ and that models, like humans, should be able to adapt to these dynamically. This contrasts with most representation learning methods, supervised or self-supervised, which learn a fixed embedding function and hence implicitly assume a single notion of similarity. For instance, models trained on ImageNet are biased towards object categories, while a user might prefer the model to focus on colors, textures or specific elements in the scene. In this paper, we propose the GeneCIS (‘genesis’) benchmark, which measures models’ ability to adapt to a range of similarity conditions. Extending prior work, our benchmark is designed for zero-shot evaluation only, and hence considers an open set of similarity conditions. We find that baselines from powerful CLIP models struggle on GeneCIS and that performance on the benchmark is only weakly correlated with ImageNet accuracy, suggesting that simply scaling existing methods is not fruitful. We further propose a simple, scalable solution based on automatically mining information from existing image-caption datasets. We find our method offers a substantial boost over the baselines on GeneCIS, and further improves zero-shot performance on related image retrieval benchmarks. In fact, though evaluated zero-shot, our model surpasses state-of-the-art supervised models on MIT-States.

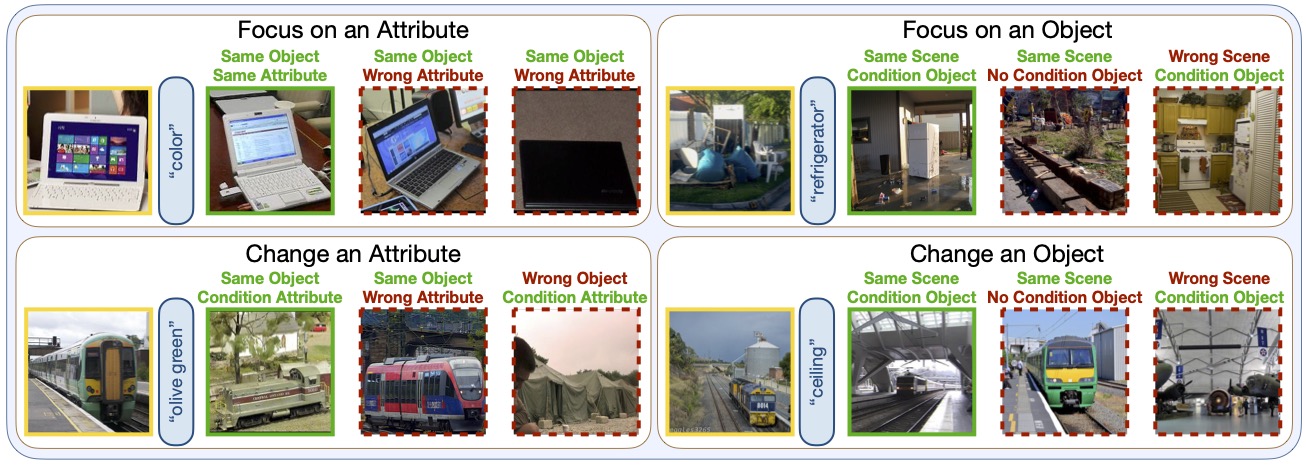

GeneCIS Examples

Our benchmark contains four conditional retrieval tasks.

Given a reference image (yellow boxes) and text condition (blue text), the model must select the only conditionally similar image (green boxes) from a curated set of gallery images.

The galleries contain hard distractor images (which are similar, but not conditionally similar, to the reference image) to prevent shortcut solutions.
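The retrieval setup described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the benchmark's official evaluation code: the function names (`rank_gallery`, `recall_at_k`) are hypothetical, the features are assumed to come from some pre-trained image and text encoder, and the query is formed by simply averaging the normalized reference-image and text features, which is one possible baseline combiner rather than the method proposed in the paper.

```python
import numpy as np


def l2_normalize(x, axis=-1, eps=1e-12):
    """Scale vectors to unit length along `axis`."""
    return x / np.maximum(np.linalg.norm(x, axis=axis, keepdims=True), eps)


def rank_gallery(ref_feat, text_feat, gallery_feats):
    """Return gallery indices ordered from most to least similar to the query.

    Assumption: the conditional query is the normalized sum of the
    reference-image feature and the text-condition feature.
    """
    query = l2_normalize(l2_normalize(ref_feat) + l2_normalize(text_feat))
    scores = l2_normalize(gallery_feats) @ query  # cosine similarities
    return np.argsort(-scores)


def recall_at_k(rankings, target_indices, k=1):
    """Fraction of queries whose single correct image is in the top k."""
    hits = [target in ranking[:k] for ranking, target in zip(rankings, target_indices)]
    return float(np.mean(hits))


# Toy example with hand-made 3-d features (hypothetical values).
ref_feat = np.array([1.0, 0.0, 0.0])    # reference image
text_feat = np.array([0.0, 1.0, 0.0])   # text condition
gallery_feats = np.array([
    [1.0, 0.0, 0.0],  # hard distractor: matches the reference, ignores the condition
    [1.0, 1.0, 0.0],  # target: matches the reference and the condition
    [0.0, 0.0, 1.0],  # unrelated image
])
ranking = rank_gallery(ref_feat, text_feat, gallery_feats)
print(ranking, recall_at_k([ranking], [1], k=1))
```

In the toy gallery, the first image plays the role of a hard distractor: it is identical to the reference but carries none of the condition, so a model that ignores the text would pick it over the target.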

Citation

GeneCIS: A Benchmark for General Conditional Image Similarity

Sagar Vaze, Nicolas Carion, Ishan Misra

In CVPR, 2023.

@inproceedings{vaze2023gen,
  title={GeneCIS: A Benchmark for General Conditional Image Similarity},
  author={Sagar Vaze and Nicolas Carion and Ishan Misra},
  booktitle={CVPR},
  year={2023}
}

Acknowledgements

Sagar Vaze is funded by a Facebook AI Research Scholarship.